Architecture Review

Before and After

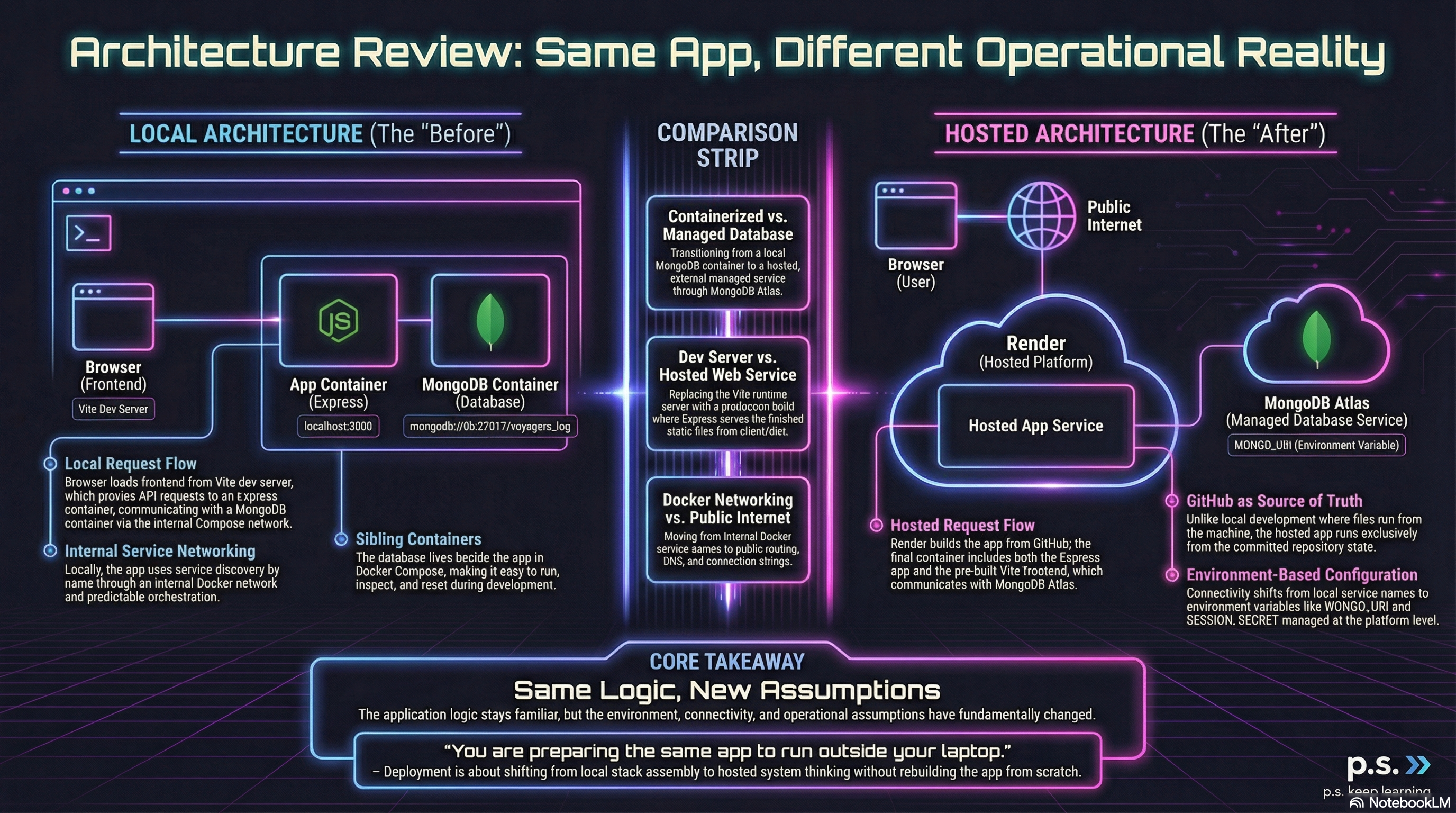

Section titled “Before and After”Voyager’s Log is still the same application, but the environment around it changed quite a bit.

This page is just a quick architecture check:

- what the app looked like locally

- what it looks like after deployment

- what changed

- what stayed the same

Figure 1. Before and After Deployment

Local Architecture

In the local version, the app was split across a few familiar pieces:

- MongoDB running in Docker

- Express running in Docker

- Vite running as a local dev server

Local request flow

- the browser loaded the frontend from Vite

- Vite proxied `/api` requests to Express

- Express talked to MongoDB through the Compose network
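The proxy step comes down to one routing decision per request. The sketch below mimics it in plain Node, assuming Express is reachable at `http://localhost:3000` (a hypothetical port; this lesson does not state it). It only imitates prefix-based proxying. In a typical setup, Vite's own `server.proxy` option does this work.

```javascript
// Assumed Express address during local development (hypothetical port).
const API_TARGET = "http://localhost:3000";

// Decide who answers a browser request while the Vite dev server is running.
function routeDevRequest(path) {
  // Requests under /api are forwarded (proxied) to Express.
  if (path.startsWith("/api")) {
    return { handledBy: "express", url: API_TARGET + path };
  }
  // Everything else (HTML, JS modules, CSS) is served by Vite itself.
  return { handledBy: "vite", url: null };
}

console.log(routeDevRequest("/api/entries").url); // http://localhost:3000/api/entries
console.log(routeDevRequest("/src/main.js").handledBy); // vite
```

The browser only ever talks to Vite's origin. As a result, the frontend can call relative URLs such as `/api/...` during development without any cross-origin setup.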

The database connection looked like this:

`mongodb://db:27017/voyagers_log`

That worked because Docker Compose gave us:

- service discovery by name

- an internal network

- predictable local orchestration
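Pulling that connection string apart shows why it only works inside Compose. This sketch uses Node's built-in `URL` class. The helper name `describeMongoUri` is hypothetical, and the parsing only suits simple single-host URIs like this one, not every form the MongoDB driver accepts.

```javascript
// Break a MongoDB connection string into the parts that matter for networking.
// Uses the WHATWG URL parser built into Node; fine for a single-host URI like
// this one, but not for every form the MongoDB driver accepts (e.g. multiple hosts).
function describeMongoUri(uri) {
  const url = new URL(uri);
  return {
    host: url.hostname, // "db" here: the Compose service name
    port: url.port || null, // empty string when no port is given
    database: url.pathname.slice(1), // strip the leading "/"
  };
}

console.log(describeMongoUri("mongodb://db:27017/voyagers_log"));
// { host: 'db', port: '27017', database: 'voyagers_log' }
```

The host `db` is not a DNS name your laptop knows about. It resolves only on the Compose network, where Docker maps each service name to its container.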

The local stack was great for development because each part was easy to run, inspect, and reset.

Hosted Architecture

In the hosted version, the shape is simpler from the outside but more production-like underneath.

Now we have:

- GitHub as the deployment source

- Render running the app container

- MongoDB Atlas as the hosted database

- Express serving both the API and the frontend

Hosted request flow

- Render builds the app from the repo

- the Dockerfile builds the frontend

- the final container includes the Express app and the built frontend

- the browser loads the frontend from Express

- Express talks to Atlas using `process.env.MONGO_URI`
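For the hosted flow to work, Express has to check incoming paths in a sensible order:

1. API routes.
2. Files from the built frontend in `client/dist`.
3. A fallback to `index.html`, so client-side routes still load.

The stdlib-only sketch below models that order as a pure function. The `/api/` prefix, the file set, and the helper name `planResponse` are assumptions. A real Express app would typically do this with `express.static` plus a catch-all route, not with code like this.

```javascript
// Assumed: the Vite build output sits in client/dist relative to the app root.
const DIST_DIR = "client/dist";

// Decide how Express should answer a GET request in production.
// `builtFiles` is a stand-in for the files that exist in client/dist.
function planResponse(urlPath, builtFiles) {
  // 1) API routes go to Express route handlers first.
  if (urlPath.startsWith("/api/")) {
    return { kind: "api", target: urlPath };
  }
  // 2) Files produced by the build (JS, CSS, images) are sent as-is.
  const relative = urlPath.replace(/^\/+/, "");
  if (builtFiles.has(relative)) {
    return { kind: "static", target: `${DIST_DIR}/${relative}` };
  }
  // 3) Any other path gets index.html so client-side routes still load.
  return { kind: "fallback", target: `${DIST_DIR}/index.html` };
}

const knownFiles = new Set(["index.html", "assets/app.js"]);
console.log(planResponse("/api/entries", knownFiles).kind); // api
console.log(planResponse("/assets/app.js", knownFiles).target); // client/dist/assets/app.js
console.log(planResponse("/admin", knownFiles).target); // client/dist/index.html
```

The order matters. If the fallback ran before the API check, a request to `/api/entries` would receive `index.html` instead of JSON.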

In production, the built frontend is served from:

`client/dist`

And the database connection is no longer a Compose service name. It now comes from configuration:

`process.env.MONGO_URI`

The Biggest Changes

1) The database moved out of the local stack

Locally, MongoDB lived beside the app in Compose.

In deployment, MongoDB became an external managed service through Atlas.

2) Vite stopped being a runtime server

Locally, Vite actively served the frontend.

In deployment, Vite only builds the frontend. Express serves the finished files.

3) Configuration matters more

Locally, defaults and shortcuts were easier to get away with.

In deployment, the app depends on correct runtime configuration like:

- `MONGO_URI`

- `SESSION_SECRET`

- `NODE_ENV`

- `PORT`
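One way to act on this is to read every setting in one place at startup and stop immediately if a required value is missing. The sketch below is an assumption about how such a loader could look, not the course's own code. The helper name `loadConfig`, the default port `3000`, and the placeholder `dev-only-secret` are all hypothetical. The development fallback simply reuses the local Compose connection string.

```javascript
// Read runtime configuration from an environment object (normally process.env).
// Required values throw in production instead of silently using a default.
function loadConfig(env) {
  const nodeEnv = env.NODE_ENV || "development";
  const isProduction = nodeEnv === "production";

  // Return env[name], or a development-only fallback, or throw.
  function read(name, devFallback) {
    const value = env[name];
    if (value) return value;
    if (!isProduction && devFallback !== undefined) return devFallback;
    throw new Error(`Missing required environment variable: ${name}`);
  }

  // 3000 is a hypothetical default; hosts like Render normally provide PORT.
  const port = Number.parseInt(env.PORT || "3000", 10);
  if (!Number.isInteger(port) || port <= 0) {
    throw new Error(`Invalid PORT: ${env.PORT}`);
  }

  return {
    nodeEnv,
    port,
    // Local fallback is the Compose URI; it is never used in production.
    mongoUri: read("MONGO_URI", "mongodb://db:27017/voyagers_log"),
    // Hypothetical placeholder secret, for local development only.
    sessionSecret: read("SESSION_SECRET", "dev-only-secret"),
  };
}

// In the app this would be called once, e.g. loadConfig(process.env).
console.log(loadConfig({ NODE_ENV: "development" }).mongoUri); // mongodb://db:27017/voyagers_log
```

On a hosted platform, a clear error at startup is usually easier to debug than a connection failure that only surfaces on the first request.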

4) GitHub became the source of truth

Locally, the app ran from whatever existed on your machine.

In deployment, the app runs from the committed repository state.

Local vs Hosted

| Local | Hosted |
|---|---|
| MongoDB container | MongoDB Atlas |
| Express in Docker | Express on Render |
| Vite dev server | Built frontend served by Express |
| Networking by Compose service name | Connection string from environment configuration |
| Local files | GitHub repo as deployment source |

What Stayed the Same

Even though the infrastructure changed, the app itself did not become a different project.

Voyager’s Log is still:

- a public submission app

- an admin-moderated publishing app

- an Express + MongoDB application

- a frontend talking to backend API routes

The data model is also still the same:

- voyage entries

- users

- moderation states such as `pending`, `approved`, and `hidden`
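The three moderation states form a small, fixed set, which makes them easy to validate. This sketch assumes three rules that fit an admin-moderated app but are not spelled out here:

- new public submissions start as `pending`
- an admin may set any of the three states
- only `approved` entries are shown publicly

The field names `title` and `status` and all helper names are hypothetical.

```javascript
// The three moderation states named in the data model.
const STATUSES = Object.freeze(["pending", "approved", "hidden"]);

function isValidStatus(status) {
  return STATUSES.includes(status);
}

// Assumed: a public submission always starts out pending review.
function createSubmission(title) {
  return { title, status: "pending" };
}

// Assumed: an admin can move an entry to any valid state.
function setStatus(entry, status) {
  if (!isValidStatus(status)) {
    throw new Error(`Unknown moderation status: ${status}`);
  }
  return { ...entry, status }; // return a copy instead of mutating
}

// Assumed: only approved entries appear on the public page.
function publicEntries(entries) {
  return entries.filter((entry) => entry.status === "approved");
}

const approvedEntry = setStatus(createSubmission("Rounding the Cape"), "approved");
const pendingEntry = createSubmission("Fog off Newfoundland");
console.log(publicEntries([approvedEntry, pendingEntry]).map((e) => e.title)); // [ 'Rounding the Cape' ]
```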

And the core request flow is still familiar:

- the user interacts with the frontend

- the frontend sends a request to the backend

- the backend reads or writes data

- the frontend updates based on the response
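The four steps can be traced without a browser or database by letting a plain function play the backend. Everything in this sketch is hypothetical:

- the `/api/entries` route
- the request and response shapes
- the in-memory array standing in for MongoDB

In the real app, the frontend would send the request with `fetch`, and Express would read or write through the database driver.

```javascript
// Hypothetical in-memory stand-in for the database collection.
const db = { entries: [] };

// Step 3: the backend reads or writes data and returns a response object.
function backend(request) {
  if (request.method === "POST" && request.path === "/api/entries") {
    const entry = { title: request.body.title };
    db.entries.push(entry); // write
    return { status: 201, body: entry };
  }
  if (request.method === "GET" && request.path === "/api/entries") {
    return { status: 200, body: db.entries }; // read
  }
  return { status: 404, body: { error: "Not found" } };
}

// Steps 1-2: the user submits a title and the frontend sends a request.
// Step 4: the frontend derives its new view state from the response.
function submitFromFrontend(viewState, title) {
  const response = backend({ method: "POST", path: "/api/entries", body: { title } });
  if (response.status !== 201) {
    return { ...viewState, message: "Submission failed" };
  }
  return { ...viewState, message: `Saved "${response.body.title}" for review` };
}

console.log(submitFromFrontend({ message: "" }, "Becalmed at the Equator").message);
// Saved "Becalmed at the Equator" for review
```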

The Main Lesson

Deployment did not require rebuilding the app from scratch.

What changed was how the app was:

- built

- packaged

- configured

- connected

- hosted

That is the big architectural lesson here.

You are not making a separate “production app.”

You are preparing the same app to run outside your laptop.

Final Takeaway

The local version taught us how the parts fit together.

The hosted version taught us how those same parts behave in a real deployment environment.

Both mattered.

That is the real shift in this lesson: from local stack assembly to hosted system thinking.

Extra Bits & Bytes

AWS: What Is Distributed Computing?

⏭ Dry Dock Drills

Now that the deployment path is complete, the next step is practice: breaking, fixing, and re-shipping the system on purpose.